Running jobs on EC2

rllab comes with support for running jobs on an EC2 cluster. Here are the steps to set it up:

- Create an AWS account at https://aws.amazon.com/. You need to supply a valid credit card (or set up consolidated billing to link your account to a shared payer account) before running experiments.

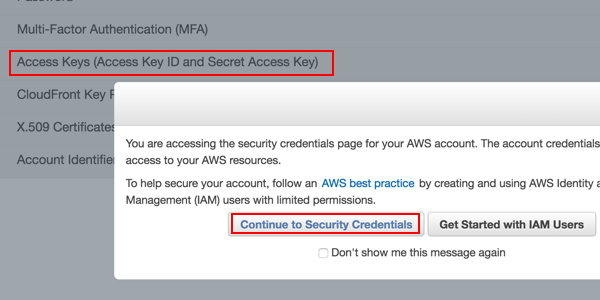

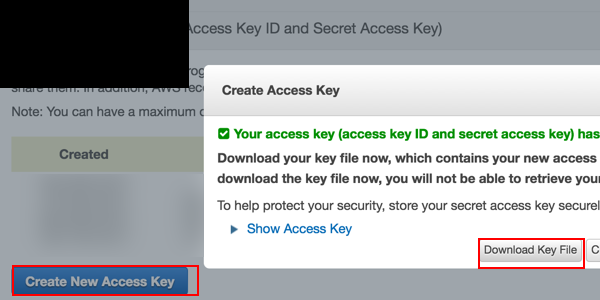

- After signing up, go to the Security Credentials console, click the “Access Keys” tab, and create a new access key. If prompted with “You are accessing the security credentials page for your AWS account. The account credentials provide unlimited access to your AWS resources,” click “Continue to Security Credentials”. Save the downloaded `root_key.csv` file somewhere safe.

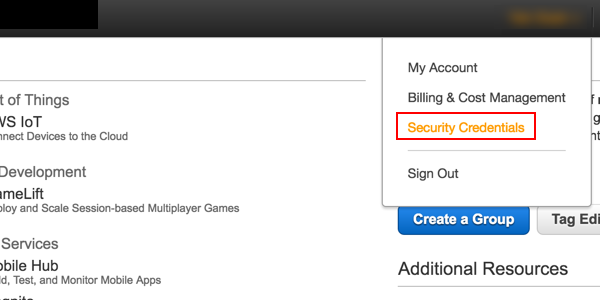

Click on the Security Credentials tab.

Click “Continue to Security Credentials” if prompted. Then, click the Access Keys tab.

Click “Create New Access Key”. Then download the key file.

- Set up environment variables. On Linux / Mac OS X, edit your `~/.bash_profile` and add the following environment variables:

```bash
export AWS_ACCESS_KEY="(your access key)"
export AWS_ACCESS_SECRET="(your access secret)"
export RLLAB_S3_BUCKET="(think of a bucket name)"
```

For `RLLAB_S3_BUCKET`, come up with a name for the S3 bucket used to store your experiment data; see the Amazon S3 documentation for the bucket naming rules. You don’t have to create the bucket manually, as the setup script will handle this later. Because S3 bucket names must be globally unique, pick a name that is long and distinctive enough not to collide with existing buckets.
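Before running the setup script, you may want to sanity-check the bucket name you chose. The following is a minimal sketch, assuming Python 3; `bucket_name_problems` is a hypothetical helper (not part of rllab), and it checks only a subset of the S3 naming rules: 3 to 63 characters; lowercase letters, digits, dots and hyphens; a letter or digit at both ends; no adjacent periods; not formatted as an IP address.

```python
import ipaddress
import os
import re

# Hypothetical helper: checks a proposed RLLAB_S3_BUCKET value against a
# subset of the S3 bucket naming rules (not the complete list).
_BUCKET_CHARS = re.compile(r"^[a-z0-9][a-z0-9.-]*[a-z0-9]$")


def bucket_name_problems(name):
    """Return a list of rule violations; an empty list means none were found."""
    problems = []
    if not 3 <= len(name) <= 63:
        problems.append("length must be between 3 and 63 characters")
    if not _BUCKET_CHARS.match(name):
        problems.append(
            "use only lowercase letters, digits, dots and hyphens, "
            "and start and end with a letter or digit"
        )
    if ".." in name:
        problems.append("must not contain two adjacent periods")
    try:
        # Raises a ValueError subclass unless the name looks like an IPv4 address.
        ipaddress.IPv4Address(name)
        problems.append("must not be formatted as an IP address")
    except ValueError:
        pass
    return problems


if __name__ == "__main__":
    name = os.environ.get("RLLAB_S3_BUCKET", "")
    issues = bucket_name_problems(name)
    for issue in issues:
        print("RLLAB_S3_BUCKET:", issue)
    if not issues:
        print("RLLAB_S3_BUCKET looks valid:", name)
```

Passing the check does not guarantee the name is still available, since another AWS account may already own it.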

- Install the AWS command line interface. Set it up using the credentials you just downloaded by running `aws configure`. Alternatively, you can edit the file at `~/.aws/credentials` (create the folder / file if it does not exist) and set its content to the following:

```ini
[default]
aws_access_key_id = (your access key)
aws_secret_access_key = (your access secret)
```

Note that there should not be quotes around the keys / secrets!
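Since a stray quote is an easy mistake here, a short check can confirm that the file parses as expected. This is a minimal sketch using Python's standard `configparser`, assuming the file layout shown above; `read_default_credentials` is a hypothetical helper, not part of rllab or the AWS CLI.

```python
import configparser
import os


def read_default_credentials(path="~/.aws/credentials"):
    """Return (key_id, secret) from the [default] profile of a credentials file.

    Raises ValueError if the file, the profile, or either key is missing, or
    if a value is wrapped in quotes (the file expects bare values).
    """
    # interpolation=None so characters such as '%' in a secret are read literally.
    parser = configparser.ConfigParser(interpolation=None)
    if not parser.read(os.path.expanduser(path)):
        raise ValueError("credentials file not found: %s" % path)
    if not parser.has_section("default"):
        raise ValueError("no [default] profile in %s" % path)
    section = parser["default"]
    values = []
    for key in ("aws_access_key_id", "aws_secret_access_key"):
        value = section.get(key, "").strip()
        if not value:
            raise ValueError("missing %s in [default]" % key)
        if value[0] in "\"'" or value[-1] in "\"'":
            raise ValueError("%s must not be quoted" % key)
        values.append(value)
    return tuple(values)
```

Calling `read_default_credentials()` with no arguments reads the file at its default location; a `ValueError` message points at the line to fix.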

- Open a new terminal to make sure that the environment variables you configured are in effect. Also make sure you are using the Python 3 version of the environment (`source activate rllab3`). Then, run the setup script:

```bash
python scripts/setup_ec2_for_rllab.py
```
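The preconditions of this step (a Python 3 interpreter, the `rllab3` environment, and the three environment variables) can be checked up front. This is a minimal sketch; `preflight` is a hypothetical helper, and it assumes conda sets `CONDA_DEFAULT_ENV` to the name of the active environment.

```python
import os
import sys

# Environment variables named in the steps above.
REQUIRED_VARS = ("AWS_ACCESS_KEY", "AWS_ACCESS_SECRET", "RLLAB_S3_BUCKET")


def preflight(environ=None):
    """Return a list of problems to fix before running setup_ec2_for_rllab.py."""
    environ = os.environ if environ is None else environ
    problems = []
    if sys.version_info[0] < 3:
        problems.append("not running under Python 3")
    # Assumption: conda exports the active environment's name as CONDA_DEFAULT_ENV.
    env_name = environ.get("CONDA_DEFAULT_ENV", "")
    if env_name != "rllab3":
        problems.append("active conda environment is %r, expected 'rllab3'" % env_name)
    for var in REQUIRED_VARS:
        if not environ.get(var):
            problems.append("%s is not set" % var)
    return problems


if __name__ == "__main__":
    for issue in preflight():
        print("preflight:", issue)
```

An empty result from `preflight()` only means the local setup looks consistent; it does not verify the credentials against AWS.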

- If the script runs without errors, you’ve fully set up rllab for use on EC2! Try running `examples/cluster_demo.py` to launch a single experiment on EC2. If it succeeds, you can then comment out the last line, `sys.exit()`, to launch the whole set of 15 experiments, each on an individual machine running in parallel. You can sign in to the EC2 panel to view the status of spot instance requests or running instances.

- While the experiments are running (or when they are finished), use `python scripts/sync_s3.py first-exp` to download the stats collected by the experiments. You can then run `python rllab/viskit/frontend.py data/s3/first-exp` and navigate to http://localhost:5000 to view the results.
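The “launch one experiment first, then all 15” workflow can be pictured as a loop over a parameter grid that stops early. The sketch below is hypothetical: it does not call rllab's launcher, the grid values (three step sizes times five seeds) are assumptions chosen only to give 15 combinations, and `launch` stands in for whatever call submits one experiment to EC2.

```python
import itertools

# Hypothetical parameter grid: 3 step sizes x 5 seeds = 15 experiments.
# The actual values in examples/cluster_demo.py may differ.
STEP_SIZES = [0.01, 0.05, 0.1]
SEEDS = [1, 11, 21, 31, 41]


def launch_grid(launch, only_first=True):
    """Call launch(step_size, seed) for each grid point and return the points used.

    only_first=True plays the role of the sys.exit() call: it stops after
    the first launch, so a single experiment is submitted as a smoke test.
    """
    launched = []
    for step_size, seed in itertools.product(STEP_SIZES, SEEDS):
        launch(step_size, seed)
        launched.append((step_size, seed))
        if only_first:
            break
    return launched
```

For example, `launch_grid(lambda s, seed: print("would launch", s, seed))` prints a single line, while passing `only_first=False` walks all 15 combinations, mirroring what happens once `sys.exit()` is commented out.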
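Before opening viskit, it can help to confirm that the sync actually pulled down data. This is a minimal sketch, assuming the sync places one subdirectory per experiment under `data/s3/first-exp` and that each contains a `progress.csv` log (an assumption about rllab's log layout, not stated above); `find_synced_runs` is a hypothetical helper.

```python
import os


def find_synced_runs(root="data/s3/first-exp", marker="progress.csv"):
    """Return the sorted run directories under root that contain the marker file.

    Assumption: each experiment writes its logged stats to progress.csv
    inside its own subdirectory. A missing root simply yields an empty list.
    """
    runs = []
    for dirpath, _dirnames, filenames in os.walk(root):
        if marker in filenames:
            runs.append(os.path.relpath(dirpath, root))
    return sorted(runs)
```

Under that assumption, the count returned by `len(find_synced_runs())` should grow toward 15 as the full set of experiments reports back.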